Calculating Machines

The abacus is a wooden frame with beads on rods. The beads are moved up or down to add, subtract, multiply or divide. The abacus has been used for thousands of years to do simple arithmetic. Different styles of this calculating machine have been used in Japan and Russia.

The slide rule is a rectangular calculator with a movable center slide. The slide is moved back and forth to multiply, divide, or raise numbers to powers. The slide rule performs complex arithmetic. Although once commonly found throughout the United States, this device is no longer widely manufactured or used.

In the late 1800s, William Seward Burroughs (1855-1898) invented the adding machine to help with business-related calculations. Originally these machines were mechanical, meaning non-electronic. Numbers were entered with one key for each digit. Then a large crank was pulled forward to calculate the total.

Herman Hollerith invented a tabulating machine that counted information stored on a series of punch cards. This device was used successfully in the 1890 U.S. Census. In 1924, the company that had grown out of Hollerith's firm changed its name to International Business Machines, or IBM.

The electronic handheld calculator contains a tiny integrated circuit called the "microprocessor chip." When handheld calculators were introduced in the 1970s, they cost several hundred dollars each. Calculators are now more powerful at a fraction of that price.

The Father of Computing

English mathematician and inventor Charles Babbage (1792-1871) has been called the Father of Computing because he was the first person to imagine a machine that could be programmed to run a set of step-by-step directions.

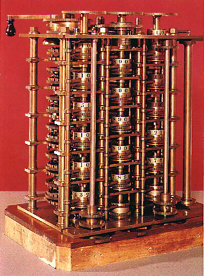

Babbage designed a calculator to print mathematical tables used in business and navigation. He called this machine the Difference Engine. Before completing it, Babbage planned the Analytical Engine, the first programmable device. His invention was to be capable of solving any mathematical problem programmed into it. The Analytical Engine's design included an input device, memory, processing unit, and printer, much like those of current computers.

Designed before electric power was available, the Analytical Engine was to be driven by a steam engine. Problems would be entered using thick cards containing holes to represent commands, a technique developed by Joseph-Marie Jacquard for his cloth-making looms. Punch cards such as these were used until the 1970s. To output the answer, Babbage devised a printing machine decades before the invention of the typewriter.

At a dinner party in November 1834, Ada Byron, the Countess of Lovelace (1815-1852), heard of Babbage's work on the Analytical Engine. Interested in the topic, Lady Lovelace developed a friendship with Babbage. Several years later, she developed a plan for solving a mathematical problem on his machine, which is now considered the first computer program ever written. The Analytical Engine is now recognized as the first general purpose computer ever designed, even though it was never built!

Despite years of work, Babbage lacked the technology required to complete his visionary machine. Only partial sections of the Analytical Engine exist today, including portions that were built after Babbage's death.

World War II

Shortly before World War II, the Polish secret service reconstructed a German Enigma machine, the device used by the Nazi military for coding secret messages.

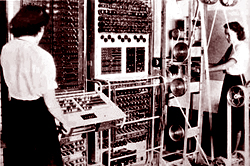

The device was sent to Bletchley Park in England for study, where scientists such as Alan Turing (portrait) worked to break the Enigma code. To attack another German cipher, known as Lorenz, British engineers had to invent an electronic device. That machine, called the Colossus, first ran successfully in December 1943 and soon began decoding German communications. The Colossus, a special purpose machine, could not be easily programmed to perform other operations.

However, the machine's inventors pioneered new technologies used in the computers that were to follow shortly. During the war, ten of these machines were built. Many scholars believe Colossus was vital to winning World War II.

ENIAC: The First Electronic Computer

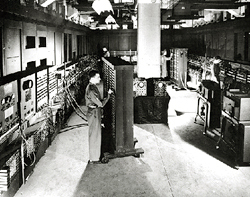

The world's first general-purpose electronic digital computer was named the Electronic Numerical Integrator and Computer, or ENIAC. John Mauchly and J. Presper Eckert developed the ENIAC at the Moore School of Electrical Engineering at the University of Pennsylvania. From 1946 to 1955 the ENIAC calculated ballistic tables and weather forecasts.

The ENIAC contained 18,000 vacuum tubes of 16 different types. The ENIAC weighed approximately 60,000 pounds and had a length of almost 100 feet.

To enter a new program, the ENIAC had to be rewired. Data was entered and results were output using IBM punch cards. On average, the ENIAC would run for 5.6 hours before making errors due to failed vacuum tubes. This first generation computer was capable of performing 5,000 calculations per second, a small fraction of the speed of today's computers.

The ENIAC was officially unveiled to the public on Valentine's Day, February 14, 1946. Articles and press releases describing the device appeared in popular magazines such as Newsweek.

At the first demonstration of an electronic computer at work, the ENIAC multiplied the number 97,367 by itself five thousand times in less than a second. The event was a major news story in 1946.

Nearly every computer today uses the so-called von Neumann architecture, named for mathematician John von Neumann, in which the program being run is stored in the computer's memory. The ENIAC, by contrast, originally had to be rewired for each new program. The ENIAC, which continued working until October 2, 1955, is the great-grandfather of today's smaller, cheaper, faster personal computers. A portion of the huge ENIAC is presently on display at the Smithsonian Institution in Washington, D.C.

Four Generations of Computers

During the twentieth and twenty-first centuries, electronics have evolved through four generations of development, each smaller, more powerful and less expensive than the last.

First Generation: 1940 - 1956

Vacuum tubes measured several inches in length and were made of glass. They gave off heat and burnt out often. Burnt-out tubes in early computers had to be replaced regularly, and the machines had to be kept cool. First generation computers such as the ENIAC were enormous in size and required an abundance of energy.

Second Generation: 1956 - 1963

In 1947, Bell Telephone Laboratories invented transistors. Transistors worked like vacuum tubes, but were smaller and not made of glass. The transistors did not burn out or use a great deal of electricity. Computers having transistors were smaller in size than those of the first generation. Unfortunately, transistors frequently broke off their circuit boards and could not be easily replaced.

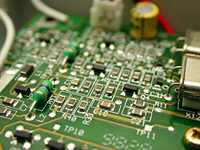

Third Generation: 1963 - 1971

In 1958, Texas Instruments developed the integrated circuit (IC), which combined many electronic components onto a single silicon chip. Earlier generations of electronics relied upon individually installed vacuum tubes or transistors. The integrated circuit generated less heat to damage the computer's sensitive internal machinery and required less electricity. The computers of the 1950s and 1960s continued to shrink in size but still looked like large pieces of furniture.

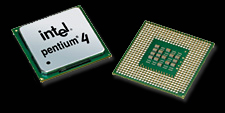

Fourth Generation: 1971 - Present

In 1970, the microprocessor chip was developed. The "chip" places a complete processor on a single integrated circuit about as large as a postage stamp. Each chip contains millions of switches that do the work once done by vacuum tubes. About the size of a quarter, today's most advanced chips are capable of performing trillions (1,000,000,000,000's) of arithmetic calculations per second.

With the creation of the chip came a wave of new inventions: the digital watch, electronic calculator, video cassette recorder (VCR), cable television, compact disc (CD) player, video game machine, cellular telephone, pager, digital audio player, DVD recorder and microcomputer.

Intel manufactures the Pentium processor that drives Microsoft Windows-based computers, while Apple Macintosh computers have a microprocessor developed by Apple, IBM and Motorola. The type of circuitry that makes up computers, as well as electronic devices such as cellular phones, wrist watches, digital audio players, DVD or Blu-Ray players, anti-lock brakes, calculators, remote controls and digital cameras, continues to evolve and develop.

The Personal Computer

As a result of the microprocessor chip, computers continued to shrink in size. The first personal computer, or microcomputer, was the Altair 8800 (photo), introduced by Micro Instrumentation and Telemetry Systems (MITS) in 1975. This computer had to be assembled from a kit before it could be used.

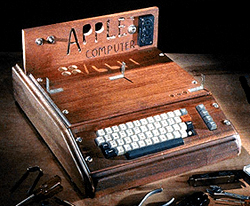

Within the next two years, several fully assembled computers were available commercially: the TRS-80 Model I was manufactured by Tandy (Radio Shack); the PET (Personal Electronic Transactor) was produced by Commodore; and the Apple I was made by Apple. These machines consisted simply of a monitor, keyboard and cassette tape player for the storage of programs and data.

In 1980, Seagate Technology introduced the ST-506, the first hard disk drive designed for microcomputers. That drive stored 5 megabytes of computer software and data, compared with the more than 250,000 megabytes (250 gigabytes) of storage commonly found on today's hard disk drives.

Although International Business Machines (IBM) had manufactured many types of equipment, including large mainframe computers, the first IBM personal computer (photo) was not produced until 1981. Once IBM entered the market, though, the microcomputer industry grew very rapidly because of IBM's influence in the business world. Today, leading manufacturers of personal computers include Dell, Compaq, Hewlett-Packard and Gateway, and Microsoft is the largest manufacturer of software.

The Internet

In 1969, the US Department of Defense Advanced Research Projects Agency (ARPA) developed ARPAnet to link research laboratory computers around the nation.

With this military network, researchers were able to send messages around the world almost instantaneously. ARPAnet gradually grew as more and more sites were added, including colleges and universities.

The first email message was sent in 1971 by Ray Tomlinson. Previously it was only possible to send messages to users on a single computer. Tomlinson's contribution was inventing an addressing system using the now familiar @ symbol to identify the receiving computer.
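The addressing idea can be illustrated with a short sketch. The function name `split_address` and the sample address are hypothetical, chosen only for illustration, and real email addresses follow more detailed rules than this. The sketch shows only how the @ symbol separates the user from the receiving computer.

```python
def split_address(address: str) -> tuple[str, str]:
    """Split 'user@host' into (user, host) at the last @ symbol.

    Hypothetical helper for illustration; not a full address validator.
    """
    user, sep, host = address.rpartition("@")
    # Reject input with no @ symbol, or with nothing on either side of it.
    if not sep or not user or not host:
        raise ValueError(f"not a user@host address: {address!r}")
    return user, host


# Sample address (an assumption, not from the original article).
user, host = split_address("alice@example.org")
print(user)  # the person receiving the message
print(host)  # the computer that receives it
```

Everything before the @ symbol names the user, and everything after it names the receiving computer.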

The name ARPAnet was changed to Internet, meaning communication "between networks," in 1983.

Finding and using information on the Internet was difficult, requiring the user to learn many complex commands. For this reason, only people who were very knowledgeable about computers used the Internet.

Tim Berners-Lee, working at the European Particle Physics Laboratory (known by its French acronym, CERN) in Geneva, Switzerland, created a new system for communicating on the Internet. In 1991, he replaced the existing commands with hypertext, or (hyper)links, so the user needed merely to click on a word or phrase in order to search for information.

In 1993, a college student at the University of Illinois named Marc Andreessen (portrait) created a new type of program, called a web browser. Using Berners-Lee's concept of links, Andreessen added point and click graphics to make using the Internet even easier. His program, named Mosaic, was innovative and successful.

And so, the World Wide Web (WWW) was born. Today, Internet Explorer by the Microsoft Corporation is the most popular web browser. Although email and the World Wide Web are the most popular portions of the Internet, other available services include newsgroups, listservs, Gopher, FTP, Archie, IRC, MUDs, instant messaging and weblogs.

Computer History Gallery

A showcase of accompanying photographs and illustrations is offered as well.